Introduction to HGWR Model

What is HGWR model?

Hierarchical and Geographically Weighted Regression, shorted for HGWR, is a spatial modelling method designed for data of spatial hierarchical structures. Just as its name implies, this is a combination of Hierarchical Linear Model [HLM, also known as Multilevel Model, @Raudenbush-1993] and Geographically Weighted Regression [GWR, @BrunsdonFotheringham-1996]. In this model, spatial effects are divided into three types: global fixed, local fixed and random. Formally, it is expressed as with the dependent variable, the group level independent variables, the local fixed effects, also the group level independent variables, the global fixed effects, the individual level independent variables, the random effects, the individual errors.

Why HGWR model?

As we know, hierarchical structure is commonly existing in spatial data. For example, cities can be grouped by provinces or other higher-level administrative district they belong to; house prices may share some factors from the block; and students in one school have different access to education resources with those in another school. When dealing with this type of data, we usually choose HLM to address the within-group homogeneity and the between-group heterogeneity. And there are usually two types of variables: group-level variables and sample-level variables. The formal ones are used to describe the properties of groups (such as the provinces, blocks and schools); the latter ones are observations of individual samples (such as the cities, houses and students). The effect of some sample-level variables are similar in all groups, thus they are modelled with fixed coefficients (effects). For others, they are modelled individually, i.e., with random effects.

However, for group-level variables, they can only be modelled with fixed effects. For spatial data, we would encounter some problems. According to the Tobler’s first law of Geography “Everything is related to everything else, but near things are more related than distant things” [@Tobler-1970]. If the model is calibrated with equally weighted samples, spatial heterogeneity would be overlooked [@FotheringhamBrunsdon-2002]. Thus, it requires us to distinguish “local fixed effects” from “global fixed effects” to discover spatial heterogeneity in group-level variables.

But why not GWR or Multiscale GWR [@FotheringhamYang-2017, LuBrunsdon-2017] Because when dealing with data of hierarchical structures, GWR is problematic [@HuLu-2022]. We know that GWR calibrate a model with unique coefficients on each sample by borrowing data from its neighbours. And it uses a parameter “bandwidth” to control how many neighbours are included. If samples are not hierarchically structured, everything works well. However, just imagine a situation like Figure 1. For the two samples of red color and blue color, we take the same number of their neighbours, but actually the spatial extents are not the same. In extreme cases, spatial extends of some samples could be too small to hold more than one or two location, but some are large enough. This would lead to the failure of bandwidth optimization and reduce the reliability of the optimized bandwidth.

To solve the problems mentioned above, we need to use HGWR model. It is able to modelling spatial hierarchical structure and spatial heterogeneity simultaneously. Examples below can show that it works well for spatial hierarchical data.

Modelling with HGWR Model

The R package hgwrr is built for calibrating HGWR model. In this section, we are going to show how to use it.

Installation

Package hgwrr is available on CRAN. Simply type the following codes to install it.

install.packages("hgwrr")Or download latest released source package and run the following command to install this package.

Note that RTools is required on Windows.

Usage

We are going to show the usage of hgwrr package with a simulated data.

First, we need to load this package in an R session.

library(hgwrr)

#> Loading required package: sf

#> Linking to GEOS 3.10.2, GDAL 3.4.1, PROJ 8.2.1; sf_use_s2() is TRUE

#> Loading required package: MASSThen we can calibrate an HGWR model via hgwr()

function.

hgwr(

formula, data, coords, bw,

alpha = 0.01, eps_iter = 1e-06, eps_gradient = 1e-06, max_iters = 1e+06,

max_retries = 10, ml_type = HGWR_ML_TYPE_D_ONLY, verbose = 0

)The first five arguments are mandatory.

-

formulaaccepts a formula object in R. Its format follows lme4 package. As there are three types of effects: GLSW effects, fixed effects and SLR effects, we use the following format to specify both of them:dependent ~ L(glsw) + fixed + (slr | group) dataaccepts adata.frameor asfobject in R. All variables specified informulaare extracted fromdata.(Only needed when

datais of typedata.frame)coordsaccepts a matrix of 2 columns. Each row is the longitude and latitude of each group.bwaccepts a integer or numeric number to specify the bandwidth used in geographically weighted process. Currently it can only be adaptive bandwidth.

Other arguments are optional which is used to control the backfitting

maximum likelihood algorithm. On most occasions the default values are

fine. If the default values cause some problems and you want change some

of them, please check the documentation of function hgwr()

for more information.

Example: A Small Simulated Data Set

This example is used to show the usage of this package and to test whether it works. We don’t care about how good the fitness of this model is with this data set.

A data set “mulsam.test” is provided with this package,

data(mulsam.test)

head(mulsam.test$data)

#> y g1 g2 x1 z1 group

#> 1 3.0943882 0.0443617 -0.0486926 0.9265996 0.4943128 1

#> 2 3.5794558 0.0443617 -0.0486926 0.5094696 1.3079015 1

#> 3 7.8495496 0.0443617 -0.0486926 1.6624003 1.4970410 1

#> 4 2.3392806 0.0443617 -0.0486926 -0.3305220 0.8147027 1

#> 5 0.1267381 0.0443617 -0.0486926 1.5099312 -1.8697888 1

#> 6 -2.1884856 0.0443617 -0.0486926 -1.9505113 0.4820295 1

head(mulsam.test$coords)

#> u v

#> 1 0 9

#> 2 2 19

#> 3 3 17

#> 4 4 2

#> 5 5 10

#> 6 6 1where y is the dependent variable, g1 and

g2 are two group-level variables, z1 and

x1 are two sample-level variables, group are

the labels of the groups they belong to, and U,

V are longitude and latitude coordinate values of all

groups.

We regards g1 and g2 have local fixed

effects, x1 have global fixed effects and z1

have random effects. Then we can calibrate an HGWR model with like

this

ms_hgwr <- hgwr(

formula = y ~ L(g1 + g2) + x1 + (z1 | group),

data = mulsam.test$data,

coords = mulsam.test$coords,

bw = 10, alpha = 1e-16

)

ms_hgwr

#> Hierarchical and geographically weighted regression model

#> =========================================================

#> Formula: y ~ L(g1 + g2) + x1 + (z1 | group)

#> Method: Back-fitting and Maximum likelihood

#> Data: mulsam.test$data

#>

#> Fixed Effects

#> -------------

#> Intercept x1

#> 1.852190 1.967644

#>

#> Group-level Spatially Weighted Effects

#> --------------------------------------

#> Bandwidth: 10 (nearest neighbours)

#>

#> Coefficient estimates:

#> Coefficient Min 1st Quartile Median 3rd Quartile Max

#> Intercept -0.549094 -0.439522 -0.151433 -0.024133 0.178044

#> g1 0.909293 1.253143 1.692616 1.927313 2.310056

#> g2 1.083410 1.279953 1.415744 1.594576 1.693768

#>

#> Sample-level Random Effects

#> ---------------------------

#> Groups Name Std.Dev. Corr

#> group Intercept 1.033171

#> z1 1.033171 0.000000

#> Residual 1.033171

#>

#> Other Information

#> -----------------

#> Number of Obs: 873

#> Groups: group , 25The output of the model shows estimations of global fixed effects, summary of those of local fixed effects. Also there are the standard deviations of random effects and correlation coefficients between them.

Then we can have a look on the coefficient estimations.

coef(ms_hgwr)

#> Intercept g1 g2 x1 z1

#> 1 0.9233672 2.3100563 1.623094 1.967644 1.817155

#> 2 1.1355688 1.9273133 1.623457 1.967644 2.305716

#> 3 1.8948376 1.9681553 1.625849 1.967644 2.251604

#> 4 1.1327246 2.2656619 1.536906 1.967644 1.590994

#> 5 1.7883252 2.1834680 1.594576 1.967644 1.698679

#> 6 0.7060594 2.0168951 1.399640 1.967644 1.855324

#> 7 1.0109699 1.9266816 1.355484 1.967644 1.105044

#> 8 1.7495857 1.8658473 1.670593 1.967644 1.021006

#> 9 0.8418062 1.8149658 1.279953 1.967644 2.980240

#> 10 1.3535790 1.9201790 1.362606 1.967644 2.081303

#> 11 1.0394353 1.9399671 1.480825 1.967644 1.999491

#> 12 1.8167418 1.8022871 1.693768 1.967644 2.303986

#> 13 2.4239639 1.5126602 1.612656 1.967644 1.471564

#> 14 0.5334871 1.6926159 1.237340 1.967644 1.676205

#> 15 1.3043111 1.6881432 1.264833 1.967644 1.454624

#> 16 1.3370861 1.5843740 1.238710 1.967644 2.374940

#> 17 1.5039024 1.5804393 1.525840 1.967644 1.042542

#> 18 2.0337854 1.2531427 1.376711 1.967644 1.454729

#> 19 1.9575950 1.2613191 1.415744 1.967644 2.718663

#> 20 2.5267858 1.2438959 1.500855 1.967644 1.902474

#> 21 1.4363110 1.1675786 1.354417 1.967644 1.672610

#> 22 2.6255900 1.0711151 1.543638 1.967644 3.185923

#> 23 2.6121111 0.9099629 1.168313 1.967644 1.382425

#> 24 2.2433299 0.9092926 1.083410 1.967644 1.755667

#> 25 3.1624476 0.9708449 1.183679 1.967644 1.635566With ggplot2 or other packages, we can create some figures.

library(ggplot2)

#> Error in get(paste0(generic, ".", class), envir = get_method_env()) :

#> object 'type_sum.accel' not found

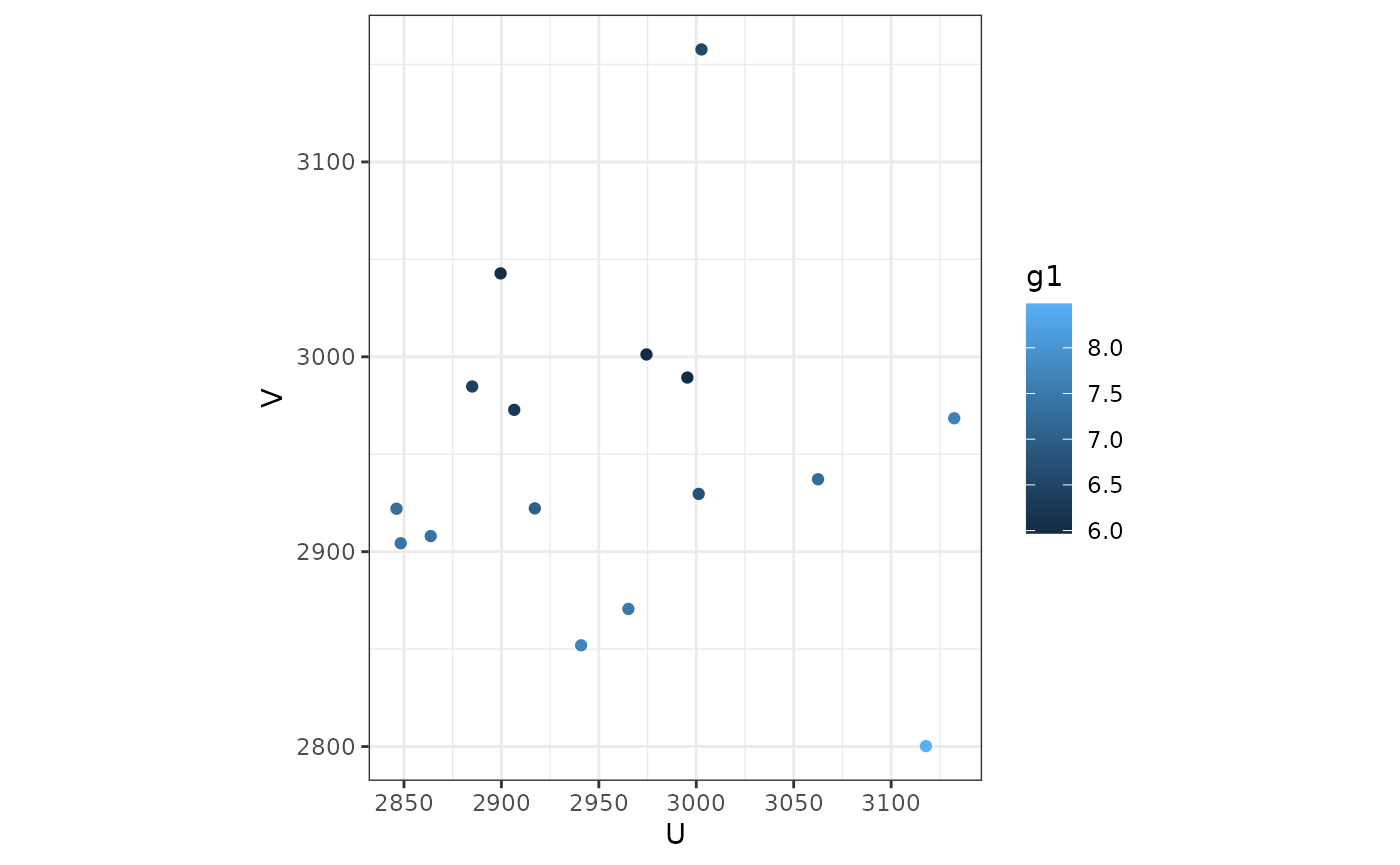

ms_hgwr_coef <- as.data.frame(cbind(mulsam.test$coords, coef(ms_hgwr)))

ggplot(ms_hgwr_coef, aes(u, v, color = g1)) + geom_point() +

coord_fixed() + theme_bw()

We can also convert it to spatial data and use tmap to visualize.

library(sf)

library(tmap)

#> Breaking News: tmap 3.x is retiring. Please test v4, e.g. with

#> remotes::install_github('r-tmap/tmap')

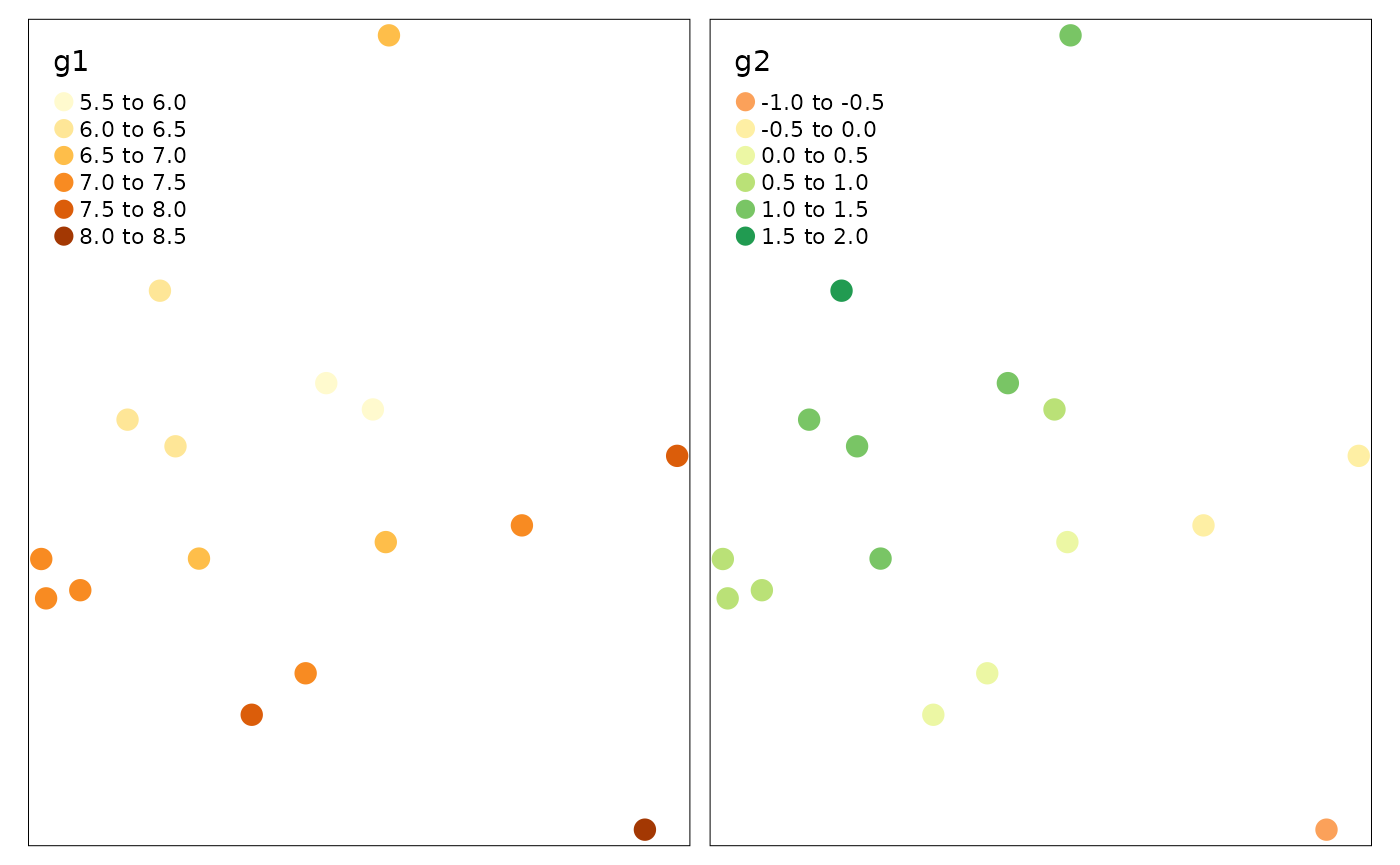

ms_hgwr_coef_sf <- st_as_sf(ms_hgwr_coef,

coords = names(mulsam.test$coords),

crs = 27700)

tm_shape(ms_hgwr_coef_sf) + tm_dots(col = c("g1", "g2"), size = 0.5)

And we can also fetch the fitted and residuals.

head(data.frame(

real = mulsam.test$data$y,

fitted = fitted(ms_hgwr),

residuals = residuals(ms_hgwr)

))

#> real fitted residuals

#> 1 3.0943882 3.668274 -0.5738854

#> 2 3.5794558 4.325927 -0.7464712

#> 3 7.8495496 6.938180 0.9113695

#> 4 2.3392806 1.776904 0.5623768

#> 5 0.1267381 0.520124 -0.3933859

#> 6 -2.1884856 -2.015177 -0.1733083The summary() function will give some statistical

information about this model.

summary(ms_hgwr)

#> Hierarchical and geographically weighted regression model

#> =========================================================

#> Formula: y ~ L(g1 + g2) + x1 + (z1 | group)

#> Method: Back-fitting and Maximum likelihood

#> Data: mulsam.test$data

#>

#> Parameter Estimates

#> -------------------

#> Fixed effects:

#> Estimated Sd. Err t.val Pr(>|t|)

#> Intercept 1.852190 0.203079 9.120541 0.000000 ***

#> x1 1.967644 0.033827 58.168539 0.000000 ***

#>

#> Bandwidth: 10 (nearest neighbours)

#>

#> GLSW effects:

#> Mean Est. Mean Sd. *** ** * .

#> Intercept -0.208441 0.247059 0.0% 0.0% 4.0% 24.0%

#> g1 1.631474 1.795246 0.0% 0.0% 0.0% 0.0%

#> g2 1.430116 1.476570 0.0% 0.0% 0.0% 0.0%

#>

#> SLR effects:

#> Groups Name Mean Std.Dev. Corr

#> group Intercept 0.000000 1.033171

#> z1 1.869539 1.033171 0.000000

#> Residual 0.079964 1.033171

#>

#>

#> Diagnostics

#> -----------

#> rsquared 0.905207

#> logLik -118.467314

#> AIC 261.985064

#>

#> Scaled Residuals

#> ----------------

#> Min 1Q Median 3Q Max

#> -3.416380 -0.584726 0.092501 0.725766 3.028003

#>

#> Other Information

#> -----------------

#> Number of Obs: 873

#> Groups: group , 25On the current stage, only pseudo is available. In the future, more diagnostic information will be provided in this package.

Example: Large Scale Simulated Data

In the former example, there are only 873 observations and 25 groups.

They are not adequate enough to get precises estimations. Here, we are

going to use a large scale simulated data set to show the performance of

HGWR model. As the true value of coefficients are already known (stored

in variable msl_beta), closeness between estimated and true

values is an practical performance metric.

The data set is provided here. Its

structure is similar to data mulsam.test.

data(multisampling)

head(multisampling$data)

#> y g1 g2 x1 z1 group

#> 1 4.274065 -0.1541009 0.1088925 3.1685285 -0.6264538 1

#> 2 0.952104 -0.1541009 0.1088925 -0.0310156 0.1836433 1

#> 3 -2.277711 -0.1541009 0.1088925 -1.0921285 -0.8356286 1

#> 4 1.210245 -0.1541009 0.1088925 -0.9828463 1.5952808 1

#> 5 4.546979 -0.1541009 0.1088925 1.7193320 0.3295078 1

#> 6 -5.204828 -0.1541009 0.1088925 -1.8466449 -0.8204684 1

head(multisampling$coords)

#> u v

#> 1 0 0

#> 2 0 1

#> 3 0 2

#> 4 0 3

#> 5 0 4

#> 6 0 5

msl_beta <- multisampling$beta

head(msl_beta)

#> Intercept g1 g2 x1 z1

#> 1 0.3495151 0 0.2519548 2 2.463117

#> 2 0.4287200 0 0.3142063 2 2.633550

#> 3 0.3296451 0 0.3843869 2 1.252887

#> 4 0.2671497 0 0.4613005 2 1.905223

#> 5 0.4647590 0 0.5430762 2 1.908053

#> 6 0.2715478 0 0.6271901 2 1.425105In this data, we also regards g1 and g2 as

two group-level variables, z1 and x1 as two

sample-level variables, group as the labels of the groups

they belong to, and U, V as longitude and

latitude coordinate values of all groups. Then we calibrate an HGWR

model.

As the data is large (21434 observations), it may take some time to get results.

msl_hgwr <- hgwr(

formula = y ~ L(g1 + g2) + x1 + (z1 | group),

data = multisampling$data,

coords = multisampling$coords,

bw = 128, kernel = "bisquared", alpha = 1e-16

)

msl_hgwr

#> Hierarchical and geographically weighted regression model

#> =========================================================

#> Formula: y ~ L(g1 + g2) + x1 + (z1 | group)

#> Method: Back-fitting and Maximum likelihood

#> Data: multisampling$data

#>

#> Fixed Effects

#> -------------

#> Intercept x1

#> 1.993571 2.013951

#>

#> Group-level Spatially Weighted Effects

#> --------------------------------------

#> Bandwidth: 128 (nearest neighbours)

#>

#> Coefficient estimates:

#> Coefficient Min 1st Quartile Median 3rd Quartile Max

#> Intercept -1.365347 -0.546013 -0.000998 0.568186 1.408843

#> g1 0.383958 1.101335 1.560858 2.425391 3.599753

#> g2 0.777366 1.371943 1.751608 2.461359 3.572902

#>

#> Sample-level Random Effects

#> ---------------------------

#> Groups Name Std.Dev. Corr

#> group Intercept 1.037299

#> z1 1.037299 0.000000

#> Residual 1.037299

#>

#> Other Information

#> -----------------

#> Number of Obs: 21434

#> Groups: group , 625Fitness Assessment

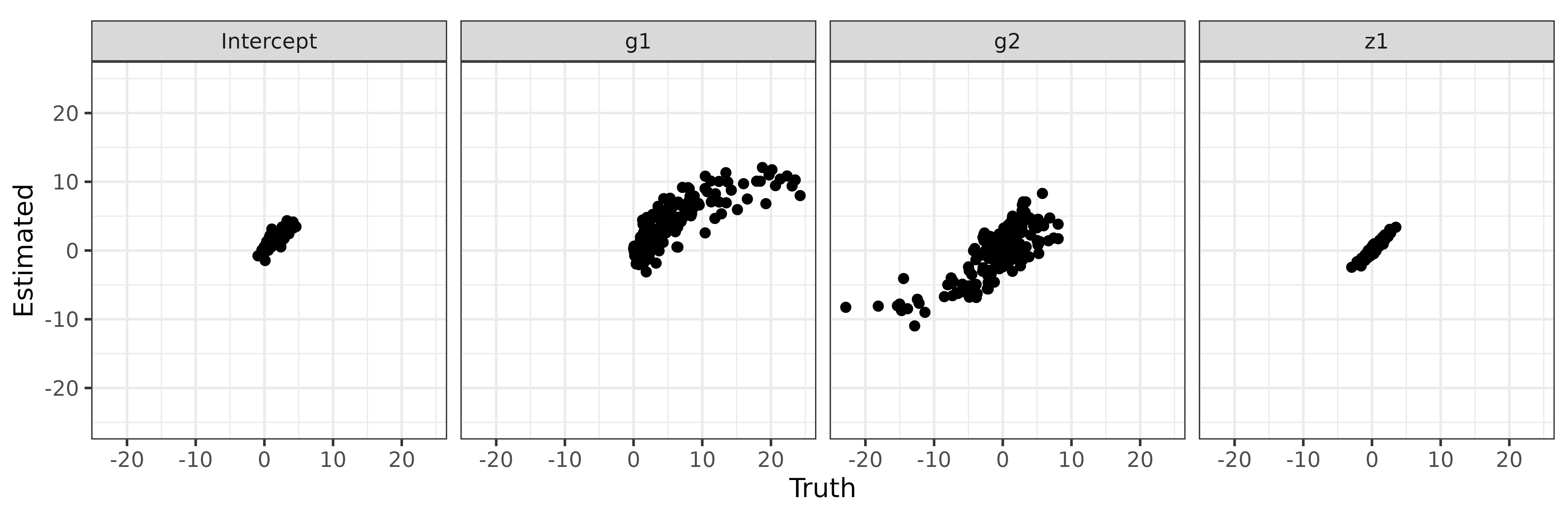

Then we check the estimations of intercept, g1,

g2 and z1 via some scatter plots.

library(dplyr)

#>

#> Attaching package: 'dplyr'

#> The following object is masked from 'package:MASS':

#>

#> select

#> The following objects are masked from 'package:stats':

#>

#> filter, lag

#> The following objects are masked from 'package:base':

#>

#> intersect, setdiff, setequal, union

library(purrr)

coef(msl_hgwr) %>%

select(Intercept, g1, g2, z1) %>%

list(label = names(.), Truth = msl_beta[names(.)], Estimated = .) %>%

pmap_df(data.frame) %>%

mutate(label = factor(label, levels = c("Intercept", "g1", "g2", "z1"))) %>%

ggplot(aes(x = Truth, y = Estimated)) + geom_point() +

coord_fixed() + scale_y_continuous(limits = c(-25, 25)) +

facet_grid(cols = vars(label)) +

theme_bw()

In addition to these scatter plots, root mean squared errors (RMSE) and mean absolute errors of estimations and true values are also very useful to assess the fitting performance.

msl_hgwr_err <- coef(msl_hgwr) %>%

select(Intercept, g1, g2, z1, x1) %>% {

as.data.frame(rbind(

MAE = map2_dbl(msl_beta, ., ~ mean(abs(.x - .y))),

RMSE = map2_dbl(msl_beta, ., ~ sqrt(mean((.x - .y)^2)))

))

}

msl_hgwr_err

#> Intercept g1 g2 x1 z1

#> MAE 0.1677028 0.4417195 0.3350320 0.4179894 0.3952250

#> RMSE 0.2127406 0.5485650 0.4073874 0.5191893 0.4937104Comparison of HGWR, GWR and HLM

As a comparison, we can also calibrate a GWR model and HLM model and have a look at their fitting performance. The GWR model can be calibrated with the following codes.

enable_parallel = Sys.info()['sysname'] != 'Darwin'

parallel_method = ifelse(enable_parallel, "omp", FALSE)

parallel_arg = ifelse(enable_parallel, 0, NA)

### GWR model

library(GWmodel)

#> Loading required package: robustbase

#> Loading required package: sp

#> Loading required package: Rcpp

#> Welcome to GWmodel version 2.4-2.

msl_gwr_data <- multisampling$data

coordinates(msl_gwr_data) <- with(multisampling, coords[data$group, ])

##### Get optimized bandwidth via golden minimization algorithm.

msl_gwr_bw <- 265 # The bandiwdth is pre-optimised to save time.

##### Calibrate GWR model with optimized bandwidth.

msl_gwr <- gwr.basic(

formula = y ~ g1 + g2 + x1 + z1,

data = msl_gwr_data,

bw = msl_gwr_bw,

adaptive = TRUE,

parallel.method = parallel_method,

parallel.arg = parallel_arg

)

#### Get coefficient estimations.

#### As samples in one group have equal estimations,

#### we use mean value of each group to represent them.

msl_gwr_coef <- cbind(msl_gwr$SDF@data, group = msl_gwr_data$group) %>%

select(Intercept, g1, g2, x1, z1, group) %>%

group_by(group) %>%

summarise_all(mean)

##### Calculate RMSE and MAE of estimations.

msl_gwr_err <- msl_gwr_coef %>%

select(Intercept, g1, g2, z1, x1) %>% {

as.data.frame(rbind(

MAE = map2_dbl(msl_beta, ., ~ mean(abs(.x - .y))),

RMSE = map2_dbl(msl_beta, ., ~ sqrt(mean((.x - .y)^2)))

))

}

msl_gwr_err

#> Intercept g1 g2 x1 z1

#> MAE 0.1827751 1.024012 0.9715697 0.2332346 0.3964786

#> RMSE 0.2448687 1.532527 1.4101019 0.2964659 0.4994751And also a HLM model calibrated with the following codes.

library(lme4)

#> Loading required package: Matrix

msl_hlm <- lmer(

formula = y ~ g1 + g2 + x1 + (z1 | group),

data = multisampling$data

)

msl_hlm_coef <- coef(msl_hlm)$group

colnames(msl_hlm_coef)[which(colnames(msl_hlm_coef) == "(Intercept)")] <- "Intercept"

msl_hlm_err <- msl_hlm_coef %>%

select(Intercept, g1, g2, z1, x1) %>% {

as.data.frame(rbind(

MAE = map2_dbl(msl_beta, ., ~ mean(abs(.x - .y))),

RMSE = map2_dbl(msl_beta, ., ~ sqrt(mean((.x - .y)^2)))

))

}

msl_hlm_err

#> Intercept g1 g2 x1 z1

#> MAE 0.2454883 1.073194 0.8378061 0.4266994 0.3952242

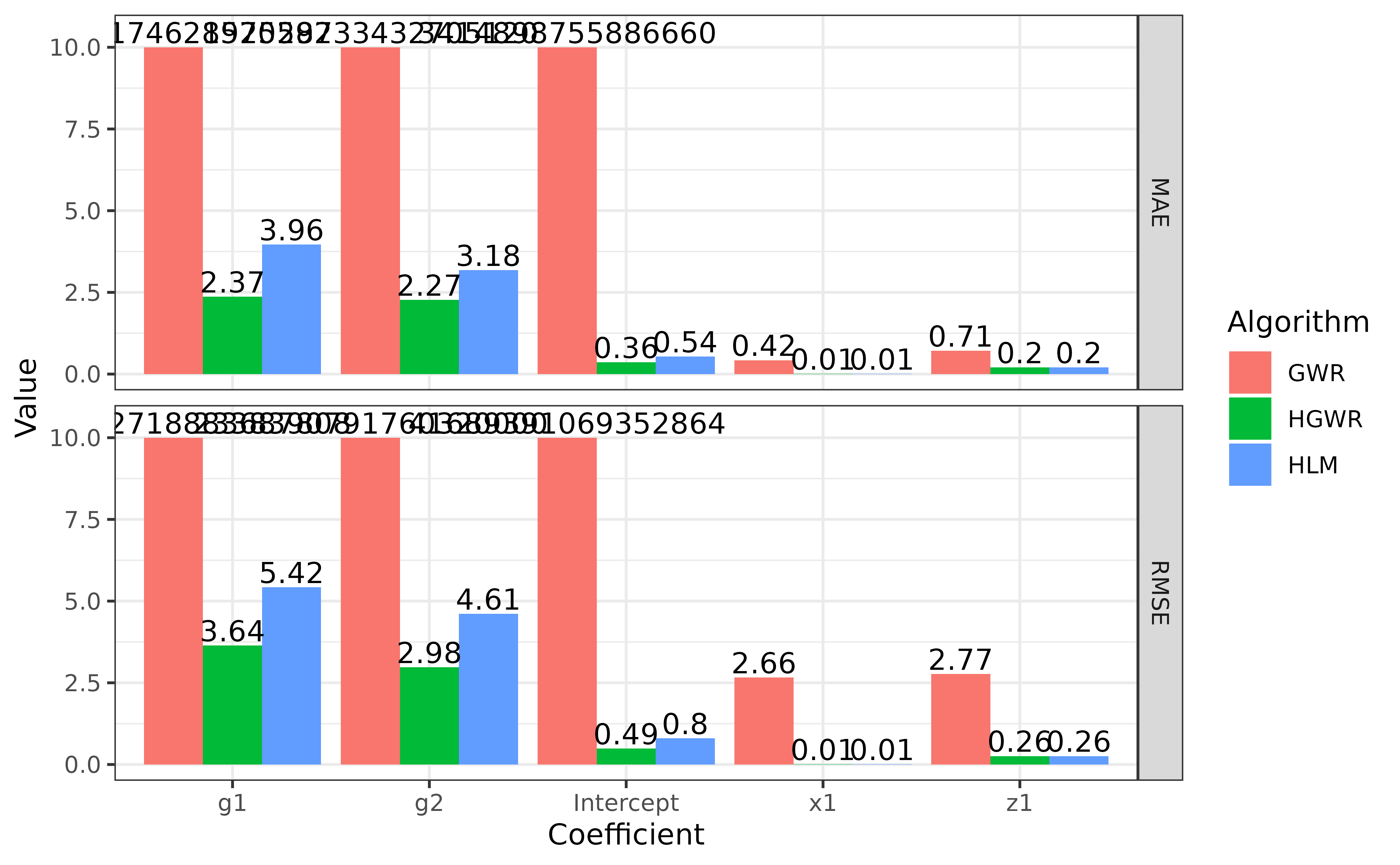

#> RMSE 0.3279763 1.237473 0.9856770 0.5300526 0.4937105A bar plot will be helpful here to compare the fitness of these three models.

list(HGWR = msl_hgwr_err, GWR = msl_gwr_err, HLM = msl_hlm_err) %>%

map2_dfr(., names(.), function(x, nx) {

map2_dfr(x, colnames(x), function(i, ni) {

data.frame(Coefficient = ni, Label = rownames(x), Value = i)

}) %>% cbind(Algorithm = nx, .)

}) %>%

ggplot(aes(x = Coefficient, y = Value, group = Algorithm, fill = Algorithm)) +

geom_col(position = "dodge") +

geom_text(aes(label = round(Value, 2), y = pmin(Value, 10), vjust = -0.2),

position = position_dodge(0.9)) +

facet_grid(rows = vars(Label)) +

scale_y_continuous(limits = c(0, 10), oob = scales::squish,

expand = expansion(add = c(0.5, 1))) +

theme_bw()

Note that in this figure we limited the scale of y axis to make the bar for

x1andz1more obvious. The actual numbers are labelled above bars.

We can say that HGWR has best fitness among these three models. It is able to give more precise estimations for local fixed effects. And it can estimate global fixed effects and also random effects as precisely as HLM does, but more precisely than GWR does.

Case Study: Impact Factors of House Price in Wuhan

As the fitness of HGWR has been demonstrated with simulation data, we

are thing about applying it in collected data sets. Here we are going to

use a case study to show the usage of HGWR on this type of data. The

data set is provided as wuhan.hp by this package.

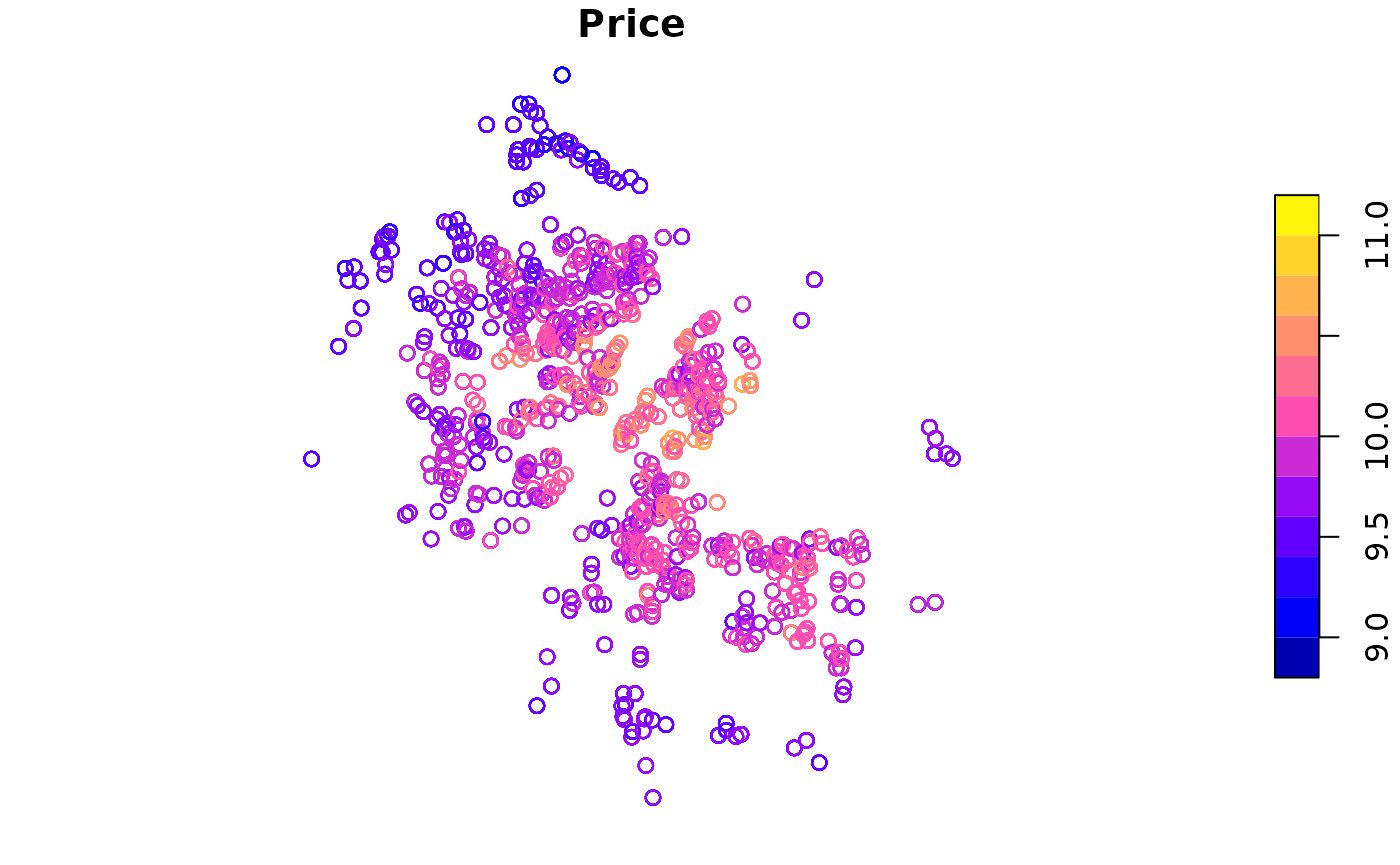

This data set, collected in 2018, has 19599 properties located in 19599 communities. In each observation, there are fields of house properties, locational and neighbourhood variables. In details, they are distances to nearby educational resources (kindergartens, primary schools, middle schools, high schools, universities), commercial areas (business districts, shopping malls and supermarkets), transportation facilities (metro stations and bus stations), rivers, lakes, and green lands. The following figure shows the distribution of these properties, and the table gives information about all available variables.

| Variables | Value | Level | Type | Logarithm |

|---|---|---|---|---|

| Price | House price per square metre. | Sample | Dependent | Yes |

| Floor.High | 1 if a property is on a high floor, otherwise 0. | Sample | Structural | |

| Floor.Low | 1 if a property is on a low floor, otherwise 0. | Sample | Structural | |

| Decoration.Fine | 1 if a property is well decorated, otherwise 0. | Sample | Structural | |

| PlateTower | 1 if a property is of the plate-tower type, otherwise 0. | Sample | Structural | |

| Steel | 1 if a property is of ‘steel’ structure, otherwise 0. | Sample | Structural | |

| BuildingArea | Building area in square metres. | Sample | Structural | Yes |

| Fee | Management fee per square meter per month. | Group | Neighbourhood | Yes |

| d.Commercial | Distance to the nearest commercial area. | Group | Locational | Yes |

| d.Greenland | Distance to the nearest green land. | Group | Locational | Yes |

| d.Water | Distance to the nearest river or lake. | Group | Locational | Yes |

| d.University | Distance to the nearest university. | Group | Locational | Yes |

| d.HighSchool | Distance to the nearest high school. | Group | Locational | Yes |

| d.MiddleSchool | Distance to the nearest middle school. | Group | Locational | Yes |

| d.PrimarySchool | Distance to the nearest primary school. | Group | Locational | Yes |

| d.Kindergarten | Distance to the nearest kindergarten. | Group | Locational | Yes |

| d.SubwayStation | Distance to the nearest subway station. | Group | Locational | Yes |

| d.Supermarket | Distance to the nearest supermarket. | Group | Locational | Yes |

| d.ShoppingMall | Distance to the nearest shopping mall. | Group | Locational | Yes |

A variable selection process [@HuLu-2022] suggests us to use the following variables to build a HGWR model:

- Fee

- d.Water

- d.Commercial

- d.PrimarySchool

- d.Kindergarten

- BuildingArea

- Floor.High

But we are going to append a variable d.ShoppingMall which is usually concerned by customers and researchers. And regards the locational and Fee as local fixed effects, the BuildingArea as global fixed effects and Floor.High as random effects. Then we can calibrate a HGWR model with the following codes.

whhp_hgwr <- hgwr(

formula = Price ~ L(d.Water + d.Commercial + d.Kindergarten + d.PrimarySchool) +

Fee + BuildingArea + d.ShoppingMall + (Floor.High | group),

data = wuhan.hp,

bw = "CV"

)

whhp_hgwr

#> Hierarchical and geographically weighted regression model

#> =========================================================

#> Formula:

#> Price ~ L(d.Water + d.Commercial + d.Kindergarten + d.PrimarySchool) +

#> Fee + BuildingArea + d.ShoppingMall + (Floor.High | group)

#> Method: Back-fitting and Maximum likelihood

#> Data: wuhan.hp

#>

#> Fixed Effects

#> -------------

#> Intercept Fee BuildingArea d.ShoppingMall

#> 9.905824 0.176803 -0.020361 -0.002869

#>

#> Group-level Spatially Weighted Effects

#> --------------------------------------

#> Bandwidth: 562 (nearest neighbours)

#>

#> Coefficient estimates:

#> Coefficient Min 1st Quartile Median 3rd Quartile Max

#> Intercept -0.255465 -0.217436 -0.196229 -0.160231 -0.136330

#> d.Water -2.455753 -2.132142 -1.918264 -1.767405 -1.485196

#> d.Commercial -4.637518 -4.456328 -4.275132 -3.973181 -3.840622

#> d.Kindergarten -0.035458 -0.031036 -0.027930 -0.025192 -0.021800

#> d.PrimarySchool -0.041803 -0.040628 -0.039498 -0.038174 -0.037094

#>

#> Sample-level Random Effects

#> ---------------------------

#> Groups Name Std.Dev. Corr

#> group Intercept 0.151359

#> Floor.High 0.079724 -0.022867

#> Residual 0.081436

#>

#> Other Information

#> -----------------

#> Number of Obs: 19599

#> Groups: group , 776Coefficient Visualization

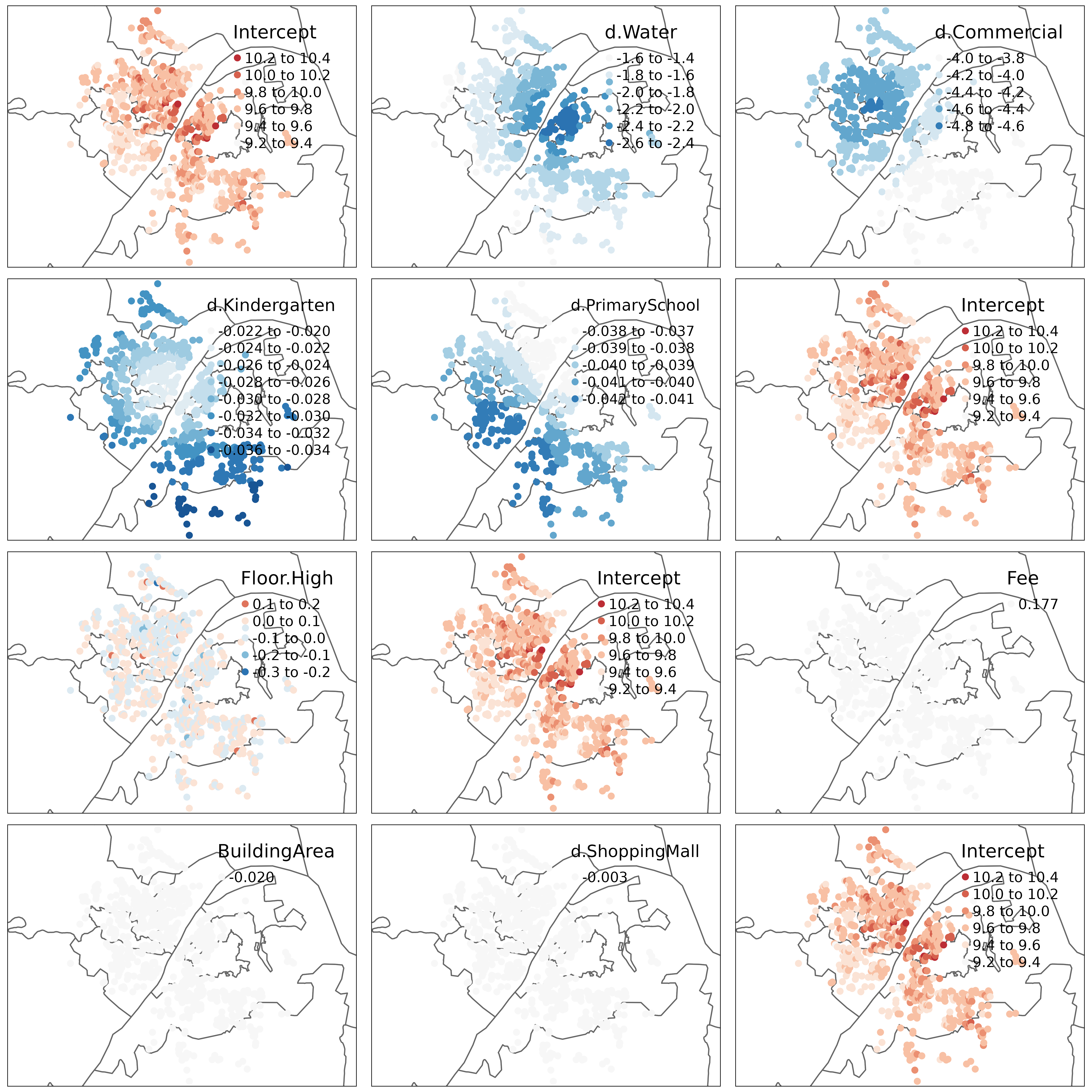

We can also make some maps to visualize the estimated coefficients. Boundary data of Wuhan is provided. It is in GeoJSON format and can be loaded by package rgdal. For coefficients, we can simply combine it with coordinates of houses. Because coefficients, coordinates and groups can be one-to-one matched.

wuhan <- sf::read_sf("https://raw.githubusercontent.com/hpdell/hgwr/docs/data/wuhan.geojson")

wuhan_hp_groups <- st_centroid(aggregate(wuhan.hp, by = list(wuhan.hp$group), mean))

wuhp_coef_sf <- cbind(coef(whhp_hgwr), st_coordinates(wuhan_hp_groups)) %>%

st_as_sf(coords = c("X", "Y"), crs = 4547)

wh_basemap <- tm_shape(wuhan) + tm_polygons(col = "white")Then visualize coefficients estimations with the basemap.

with(whhp_hgwr$effects, c(glsw, slr, fixed, "Intercept")) %>%

map(function(var) {

wh_basemap +

tm_shape(wuhp_coef_sf, is.master = T) +

tm_dots(col = var, size = 0.1, midpoint = 0,

palette = "-RdBu", legend.col.reverse = T)

}) %>%

tmap_arrange(ncol = 3)

From this figure, we can see that as BuildingArea is regarded as global fixed effects, for all samples there is only one estimations. As Floor.High is regarded as random effects, each group has a unique estimation but no spatial relationship can be seen. For local fixed effects, their estimations seems to be locally related. This is suggested by the first law of geography. Besides, spatial heterogeneity is also obvious in their estimations.

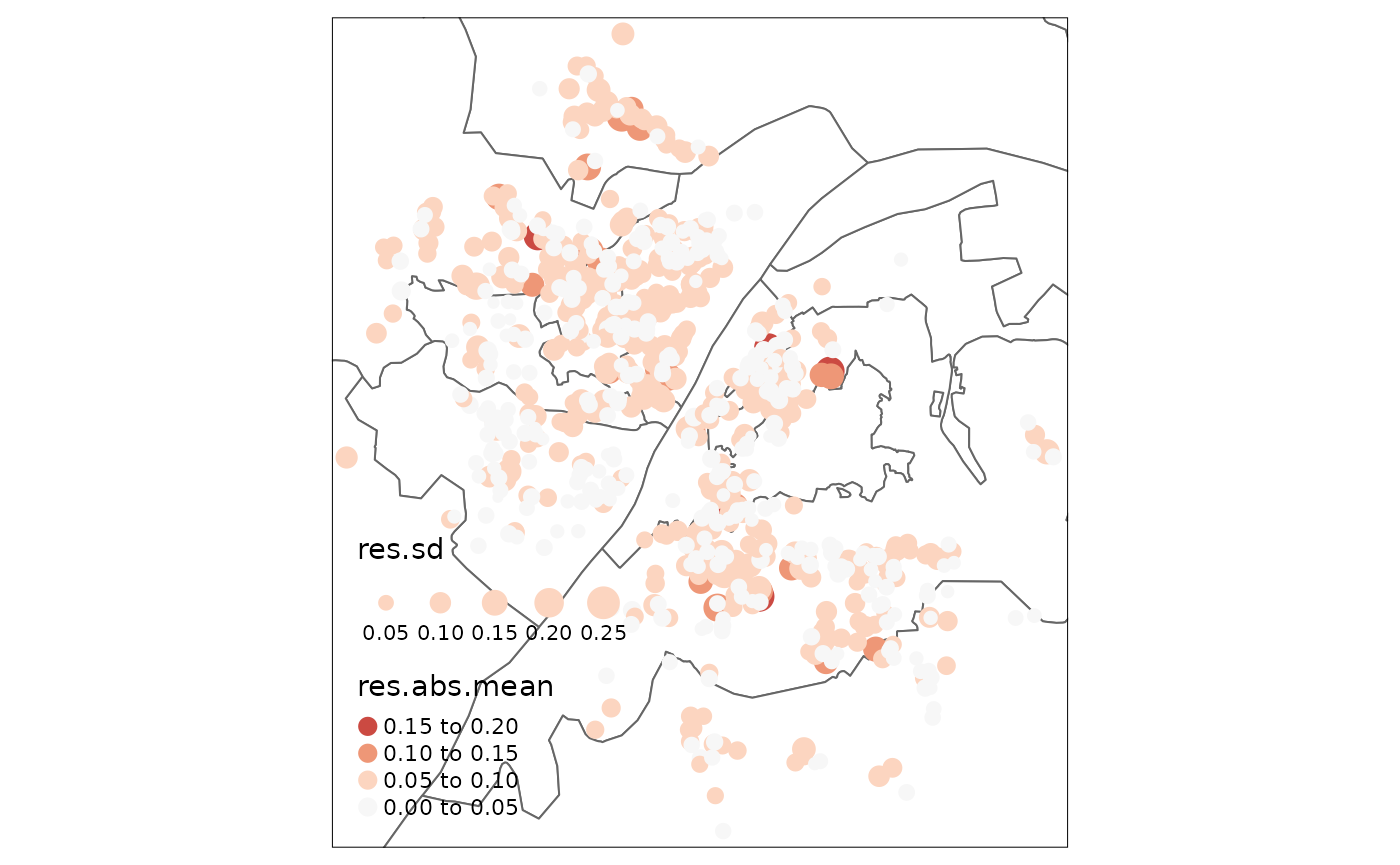

Residual Analysis

Via the standard function residuals(), we have the

access to residuals estimated by this model. Then we can visualize them

by combining the summary statistics of residuals with coordinates. For

example, here we created a map showing mean residual of each group

together with standard deviation.

whhp_hgwr_res <- data.frame(

residuals = residuals(whhp_hgwr),

group = wuhan.hp$group

) %>%

group_by(group) %>%

summarise(res.abs.mean = mean(abs(residuals)),

res.sd = sd(residuals)) %>%

cbind(st_coordinates(wuhan_hp_groups)) %>%

st_as_sf(coords = c("X", "Y"), crs = 4547)

wh_basemap +

tm_shape(whhp_hgwr_res, is.master = T) +

tm_dots(col = "res.abs.mean", size = "res.sd", midpoint = 0,

palette = "-RdBu", legend.col.reverse = T)

And we can also convey a global Moran test on the residuals to to find well estimated points.

library(spdep)

#> Loading required package: spData

#> To access larger datasets in this package, install the spDataLarge

#> package with: `install.packages('spDataLarge',

#> repos='https://nowosad.github.io/drat/', type='source')`

whhp_hgwr_res_listw <- st_coordinates(whhp_hgwr_res) %>%

knearneigh(k = 20) %>% knn2nb() %>% nb2listw() %>% listw2U()

moran.test(whhp_hgwr_res$res.abs.mean, whhp_hgwr_res_listw)

#>

#> Moran I test under randomisation

#>

#> data: whhp_hgwr_res$res.abs.mean

#> weights: whhp_hgwr_res_listw

#>

#> Moran I statistic standard deviate = 6.1948, p-value = 2.918e-10

#> alternative hypothesis: greater

#> sample estimates:

#> Moran I statistic Expectation Variance

#> 0.0639606634 -0.0012903226 0.0001109483From the p-value and Moran’s I value, we can find that although it is suggested to reject the null hypothesis, the global spatial autocorrelation is too weak to take any effects. Additionally, a local Moran test is also useful.

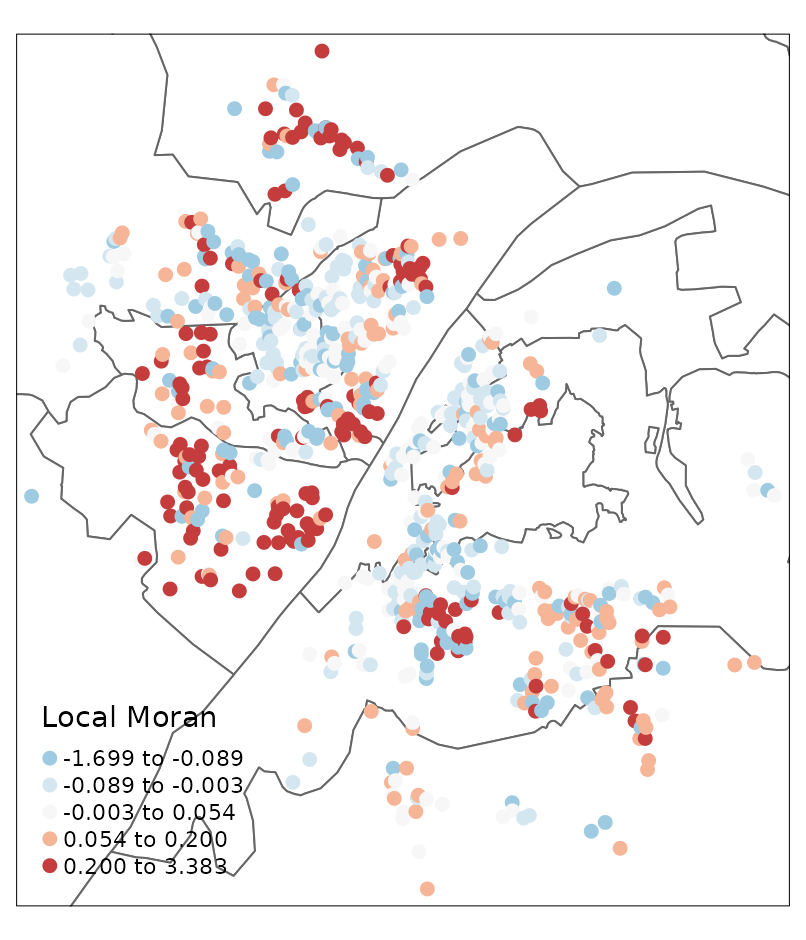

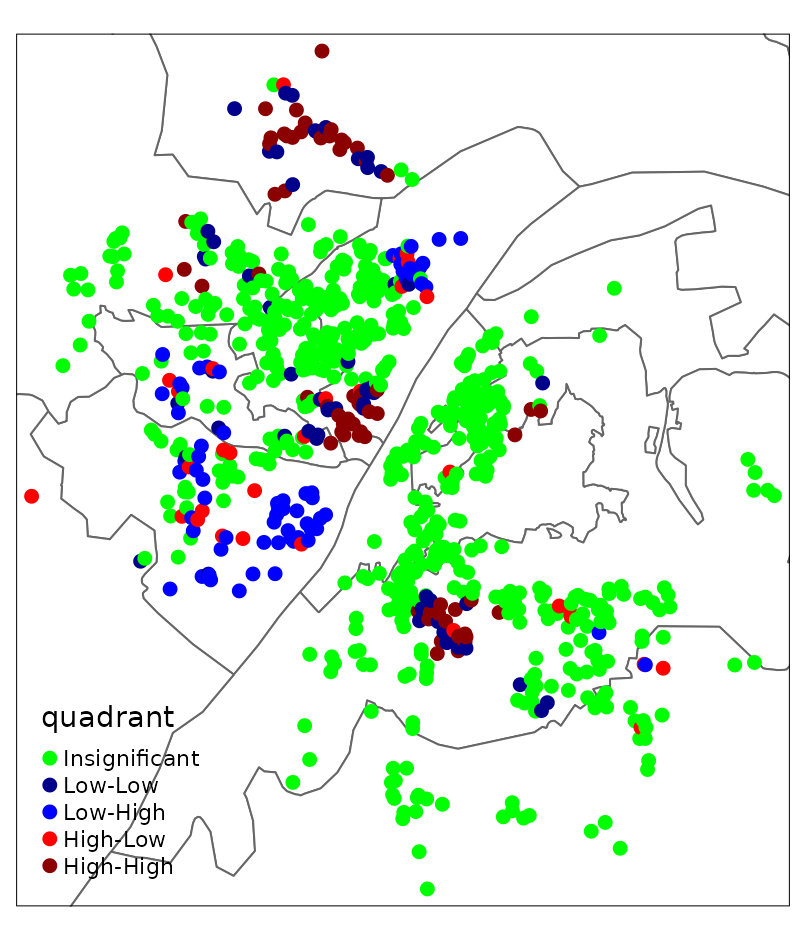

whhp_hgwr_res_localmoran <- localmoran(whhp_hgwr_res$res.abs.mean, whhp_hgwr_res_listw)

wh_basemap +

tm_shape(cbind(whhp_hgwr_res, whhp_hgwr_res_localmoran), is.master = T) +

tm_dots(col = "Ii", size = 0.2, palette = "-RdBu", style = "quantile",

midpoint = 0, title = "Local Moran")

whhp_hgwr_res$res_c <- scale(whhp_hgwr_res$res.abs.mean, scale = F)

whhp_hgwr_res$lmoran_c <- scale(whhp_hgwr_res_localmoran[,1], scale = F)

whhp_hgwr_res$quadrant <- with(whhp_hgwr_res, {

quadrant <- integer(length(res_c))

quadrant[res_c < 0 & lmoran_c < 0] <- 1

quadrant[res_c < 0 & lmoran_c > 0] <- 2

quadrant[res_c > 0 & lmoran_c < 0] <- 3

quadrant[res_c > 0 & lmoran_c > 0] <- 4

quadrant[whhp_hgwr_res_localmoran[,5] > 0.1] <- 0

as.factor(quadrant)

})

LISA_palette <- list(

"Insignificant" = "green", "Low-Low" = "darkblue", "Low-High" = "blue",

"High-Low" = "red", "High-High" = "darkred"

)

tm_layout(aes.palette = "cat") +

wh_basemap +

tm_shape(whhp_hgwr_res, is.master = T) +

tm_dots(col = "quadrant", size = 0.2,

palette = as.vector(unlist(LISA_palette)),

labels = names(LISA_palette))

It can be seen that most residuals are not spatially clustered. Most of those showing spatial clusters locate near the boundary of this study area.